VisionFive 2: the hardware

This post is part of the Upstream RISC-V series:

- The upstream RISC-V experience: running RISC-V hardware with upstream distros

- VisionFive 2

- BananaPi BPI-F3

The VisionFive 2 hardware: TL;DR

- The board comes with a cute transparent case

- The hardware is relatively fast and stable

- You absolutely need a good power supply, especially if you want to use an NVMe SSD

Hardware assembly

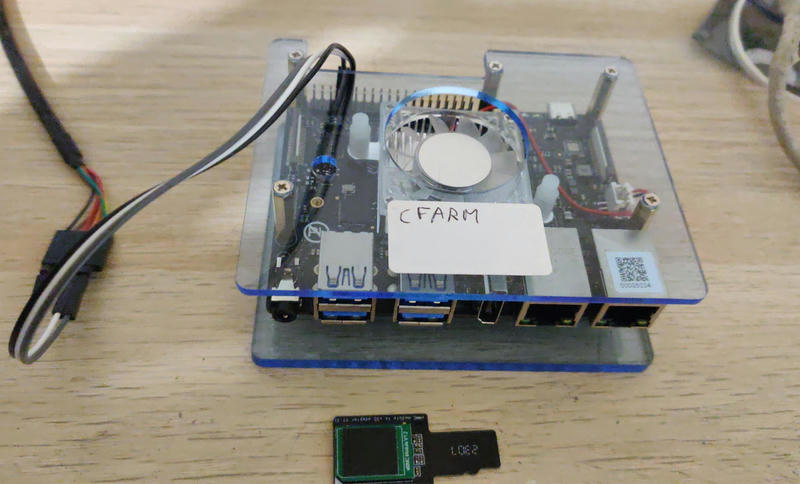

The kit came with a cute transparent case that you have to assemble. Peeling off the protective adhesive can be a bit challenging at first, but it's otherwise straightforward to assemble. On the board itself, you have to apply the thermal pad on the CPU, then attach the fan on top of it (again, it can be a bit challenging the first time).

The kit also came with a 16 GB eMMC module that you can use as a replacement for an SD card, and an adapter that lets you plug the eMMC module into a standard micro-SD slot. I guess you could technically use both an SD card and the eMMC module simultaneously on the board, but it has limited usefulness (has anyone tried RAID?)

Regarding the NVMe, I found it difficult to attach an SSD firmly in the M.2 slot, but this is a general woe I have with all M.2 slots on all motherboards. Most SSD vendors don't give you a screw to attach it, and even when they do, it often doesn't work terribly well. Here, I ended up bending the NVMe drive so that it stays in place even with no screw. It doesn't feel right, but maybe this is how it's designed to be attached?

The DIP switch to select the boot mode is well-documented elsewhere, but let's include it for completeness. Interestingly, I couldn't manage to load u-boot directly from the eMMC while it works from the SD card, but that will be a topic for the next article.

Finally, you will certainly need a serial console, and again the UART pins are well-documented.

Hardware performance

The VisionFive 2 has a StarFive JH7110 SoC, with 4 SiFive U74 cores at 1.5 GHz.

More than a year ago, I ran benchmarks on the VisionFive 2 (version 1.2A). The numbers are very encouraging: the board is about as fast and power-efficient as a Raspberry Pi 3B/3B+. Crucially, it is also 50% to 75% faster per core than previous RISC-V boards.

In practice, it feels quite responsive in interactive usage over SSH, where the VisionFive 1 or the HiFive Unmatched always felt a bit slow. I suspect this is a combination of reasonable CPU performance and good NVMe performance.

A tale of weird kernel woes with an NVMe drive

When trying out the board initially, I simply used an SD card and left the NVMe slot empty. It was working completely fine.

However, as soon as I tried an NVMe SSD (with a patched kernel because there is still no upstream support), I had weird issues: while a Crucial P3 drive worked correctly, a Samsung 970 EVO Plus drive would fail spectacularly. The system completely locked up shortly after boot, with RCU stalls and a hard lockup printed to the serial console:

[ 34.799589] rcu: INFO: rcu_sched detected stalls on CPUs/tasks:

[ 34.805520] rcu: 3-...0: (10 ticks this GP) idle=d754/1/0x4000000000000000 softirq=2411/2411 fqs=1053

[ 34.814830] rcu: (detected by 2, t=5256 jiffies, g=1493, q=1059 ncpus=4)

[ 34.821618] Task dump for CPU 3:

[ 34.824849] task:(udev-worker) state:R running task stack:0 pid:274 ppid:261 flags:0x0000000a

[ 34.834769] Call Trace:

[ 34.837220] [<ffffffff80944056>] __schedule+0x346/0xb0e

[ 48.279586] watchdog: Watchdog detected hard LOCKUP on cpu 3
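If you capture the serial console to a file, a few lines of Python are enough to spot these events across many boot attempts. This is only a rough sketch: the two patterns are taken from the excerpt above, the function name `find_lockups` is made up for illustration, and other kernel versions may word these messages differently.

```python
import re

# Patterns copied from the messages in the log excerpt above (assumption:
# other kernel versions may phrase them differently).
PATTERNS = {
    "rcu_stall": re.compile(r"rcu: INFO: \w+ detected stalls"),
    "hard_lockup": re.compile(r"Watchdog detected hard LOCKUP on cpu \d+"),
}
# Kernel timestamp prefix, e.g. "[   34.799589]".
TIMESTAMP = re.compile(r"^\[\s*(\d+\.\d+)\]")


def find_lockups(lines):
    """Return (timestamp_in_seconds, kind) for each stall or lockup line."""
    events = []
    for line in lines:
        ts = TIMESTAMP.match(line)
        seconds = float(ts.group(1)) if ts else None
        for kind, pattern in PATTERNS.items():
            if pattern.search(line):
                events.append((seconds, kind))
    return events


SAMPLE = [
    "[ 34.799589] rcu: INFO: rcu_sched detected stalls on CPUs/tasks:",
    "[ 34.821618] Task dump for CPU 3:",
    "[ 48.279586] watchdog: Watchdog detected hard LOCKUP on cpu 3",
]

if __name__ == "__main__":
    for seconds, kind in find_lockups(SAMPLE):
        print(f"{seconds:10.3f}s  {kind}")
```

To run it on a real capture, pass an open file instead of `SAMPLE`, since the function only needs an iterable of lines.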

I initially thought it could be a software issue in the kernel, since the patch series is not yet merged in the upstream kernel. But at this point the patch series was in its 9th iteration and other people seemed fine.

The next logical culprit is the hardware. I had a second Samsung 970 EVO Plus drive and two other VisionFive 2 boards, so I tried all combinations: I got mostly the same lockups in all cases, while all boards were still working fine with the Crucial P3 drive.

At this point, it started to smell like a power supply issue: maybe the Samsung SSD was simply consuming more power than the Crucial SSD? So I tested several power supplies and got interesting results:

- Akashi smart charger 5V/2.4A using 230V, model ALT2USBACCH: kernel stalls as soon as the NVMe drive is present, even if not using it at all

- ALLNET USB charger 5V/3A using 230V, model KA1803A-EU: works fine when the NVMe drive is present and unused, but produces kernel stalls when trying to actually read or write data on the NVMe drive

- my phone's power supply at 5V/2A (but 5V/6A in "WarpCharge mode", whatever it is), using 230V, model WC0506A3HK: works correctly in all cases, even when actively using the NVMe

Clearly, the rating of the power supply had a big impact, and the board seems to be able to make use of voltage above 5V.
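To put the ratings side by side, here is a back-of-the-envelope comparison of nominal power (volts times amps), using only the figures quoted in the list above. It is a sketch, not a measurement: I don't know what the board and SSD actually draw, and whether the board really gets the phone charger's 5V/6A mode is an assumption.

```python
# Nominal ratings as quoted in the list above: (name, volts, amps, outcome).
# The 5V/6A entry assumes the charger's "WarpCharge" mode is what the board
# actually gets, which was not verified.
SUPPLIES = [
    ("Akashi ALT2USBACCH", 5.0, 2.4, "stalls as soon as the NVMe drive is present"),
    ("ALLNET KA1803A-EU", 5.0, 3.0, "stalls on NVMe read/write"),
    ("WC0506A3HK (WarpCharge mode)", 5.0, 6.0, "works in all cases"),
]


def nominal_watts(volts, amps):
    """Nominal power in watts: P = V * I."""
    return volts * amps


def summarize(supplies):
    """Return (name, watts, outcome) tuples sorted by increasing power."""
    rows = [(name, nominal_watts(v, a), outcome) for name, v, a, outcome in supplies]
    return sorted(rows, key=lambda row: row[1])


if __name__ == "__main__":
    for name, watts, outcome in summarize(SUPPLIES):
        print(f"{name:32s} {watts:5.1f} W  {outcome}")
```

With these nominal figures, the two supplies that stalled sit at 12 W and 15 W, while the one that worked is rated at 30 W in its fast-charging mode.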

I ended up buying a laptop-class USB-C power supply, a 45 W Bluestork NB-PW-45-C, and I have had absolutely no NVMe issues since then.

Conclusion

While we are still very far from mainstream RISC-V adoption outside of the embedded space, this is a good board to start tinkering with Linux on RISC-V. In particular, this is the first RISC-V board with both reasonably good performance and reasonably good upstream software support. The rest of the series will be dedicated to software support.